From Overfitting to Distributed Authorship: Authorship in the Context of Generative AI Art

He Li

In generative AI art, authorship is shifting from the position of a single creator to a layered form of practice. This essay uses “overfitting” as a way to enter this question. In machine learning, overfitting refers to a model’s tendency to adhere too closely to the specific patterns, biases, and noise within its training data, rather than learning more generalizable rules. In this essay, however, the term is also extended as a critical metaphor for describing how AI-generated images may become overly adapted to training data, platform aesthetics, and the recognizable visual languages of the art system. Through this concept, I argue that AI does not make the author disappear; instead, it relocates authorship into data, selection, correction, curation, and institutional framing.

Marcel Duchamp’s Fountain can serve as a historical starting point for this discussion of authorship. As a readymade, Fountain is important not because Duchamp physically made the object, but because he transformed an existing industrial object into an artistic question through selection, naming, and exhibition context. The work challenged the traditional assumption that the author must be the person who manually produces the artwork. It also shifted authorship from handcraft to concept, choice, and framing. In other words, Fountain had already raised a question that remains central today: if the artist’s work is no longer primarily the making of an object, where does authorship reside?

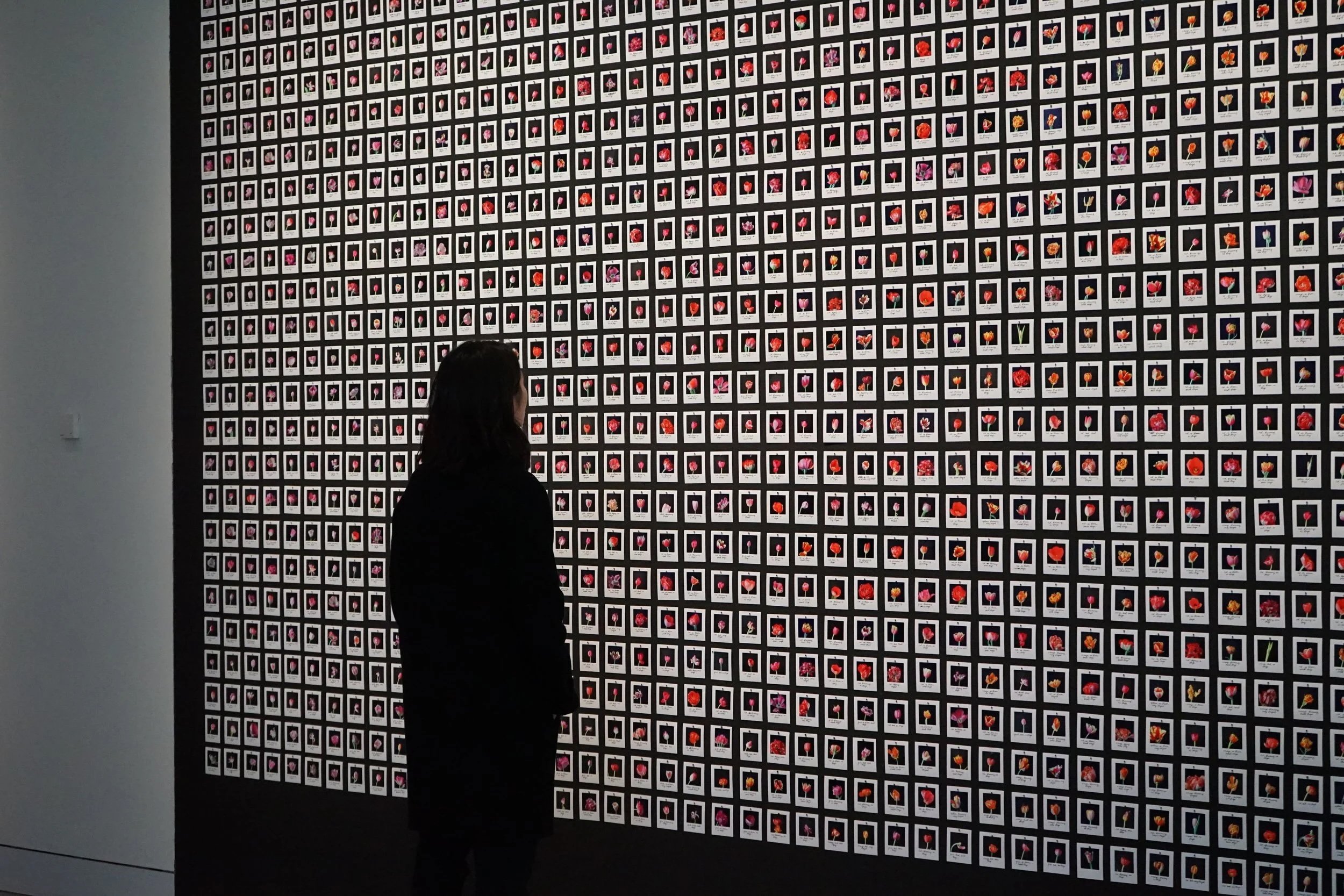

One hundred years later, generative AI art has made this question even more complex. Anna Ridler’s Myriad (Tulips) and Mosaic Virus offer an important example. Compared with public image-generation platforms, Ridler’s authorship is more clearly concentrated in her control over the dataset and conceptual framework. She does not simply use a ready-made image generator. Instead, she constructs a training dataset by photographing, selecting, and manually annotating a large number of tulip images. As a result, although the final visual images are generated through a model, their visual source, classificatory logic, and conceptual structure are largely organized by Ridler herself. Her work does not treat AI as an automatic image-producing tool; rather, it brings dataset construction, annotation, and generated results together into a single artistic system.

Fountain, Marcel Duchamp, 1917/1964, Sculpture, Ceramic, glaze, and paint. Dimensions15 × 19 1/4 × 24 5/8 in

Here, overfitting becomes significant not because it marks a simple technical failure, but because it reveals how strongly the generated images remain tied to Ridler’s own dataset. If the model begins to adhere closely to the specific patterns, colors, categories, and inconsistencies within her tulip images, those traces do not necessarily weaken the work; instead, they make visible the human decisions embedded in the dataset. The image is therefore not an independent creation of the machine, but the result of Ridler’s processes of photographing, selecting, labeling, and conceptually framing her material. Her project can thus be understood as a relatively closed authorial system: the data comes from the artist, the categories are defined by the artist, and the generated results serve her larger framework around tulip mania, Bitcoin speculation, and the value of images. In this case, AI does not dissolve authorship; it relocates it. Ridler’s role is not limited to making the final image directly, but extends to organizing the conditions under which the image is generated: the dataset, the classifications, the training process, the selection of outputs, and the conceptual framework.

However, when we turn to public image-generation platforms that anyone can use, such as Midjourney, ChatGPT’s image-generation tools, Nano Banana, China’s Jimeng(即梦), or other image generators, the situation changes. Not every user has the time, technical knowledge, or resources to train a model that fully belongs to them. Unlike Ridler’s self-constructed dataset, public platforms must respond to complex and constantly changing visual demands. For this reason, they rely on data systems that are larger in scale, more open, and much harder to trace.

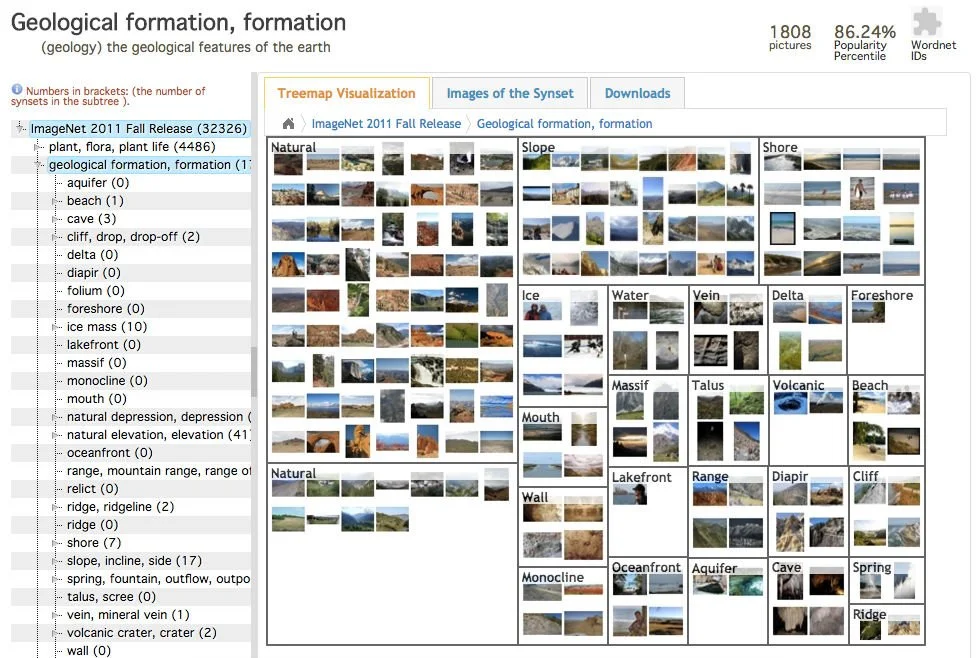

ImageNet website appearance

Early large-scale image classification systems, such as ImageNet, can help us understand this dispersal of authorship. ImageNet is not necessarily the direct foundation of today’s image-generation models, but it offers an important historical example of how machine vision depends on image collection, classification standards, and human annotation labor. From the perspective of production, its visual knowledge is not created by a single author. It is collectively produced by original image makers, online platforms, researchers, crowdworkers, and classification systems. Today’s image-generation models rely less on ImageNet-style manual classification of individual images and more on large-scale image-text pairs, web scraping, automatic filtering, and model-based selection. Yet human labor has not disappeared. It has only moved into less visible positions, such as data cleaning, content moderation, preference feedback, model evaluation, and platform rule-making. AI image generation may appear automated, but it is still built upon hidden labor and accumulated visual culture.

Public AI image generators are therefore based on a structure very different from Ridler’s project. When a user enters a prompt, it may appear that they are “creating” an image. Yet the range of visual possibilities has already been shaped by training data, model architecture, and platform rules. At this point, authorship is no longer concentrated in one artist. It is dispersed among data producers, original image makers, annotators, model engineers, platforms, and users. At the same time, under similar prompt templates, default model aesthetics, and platform recommendation systems, different users’ images can easily begin to resemble one another. A public AI platform is not simply a neutral creative tool. It also produces a recognizable, repeatable, and circulatable visual style.

In this situation, image creators no longer have to make images directly through traditional skills such as painting, photography, or 3D modeling. Instead, they guide AI toward their imagined image by refining prompts, selecting outputs, using reference images, making local modifications, applying post-production, and filtering results. Text becomes an important entry point for creation, even though it is not the only authorial act. The user’s authorship also appears in repeated acts of choosing, correcting, deleting, preserving, and reframing images. The user must constantly balance, select, and correct between the model’s built-in aesthetic frameworks, platform limitations, visual conventions, and their own imagination.

Therefore, from Ridler’s relatively closed data system to the open model structures of public platforms, authorship shifts from concentrated control to distributed organization. In the age of AI-generated images, the author has not disappeared, but it is no longer a stable and singular subject. Instead, authorship becomes a relationship jointly constructed by data, technology, labor, and institutions. Overfitting is not only a technical issue here. It also becomes a theoretical clue for understanding the transformation of authorship. It shows that AI-generated images are never neutral automatic productions; they always adhere to certain data, aesthetics, platform rules, and institutional expectations. The real question is no longer whether AI replaces the author, but how we should understand the author’s position when images are generated by so many systems at once.

He Li

He Li is an artist and photographer working at the intersection of photography, AI-generated images, machine learning, and installation. A graduate of the MFA Photography program at Parsons School of Design, his practice explores memory, bodily perception, technical images, and the machine interpretation of emotion. Through diary writing, code, UV printing, plexiglass, and heat-forming processes, he transforms personal experience into abstract visual and material systems, examining how emotion can be translated, distorted, and reimagined through technological images.